My Automated New York

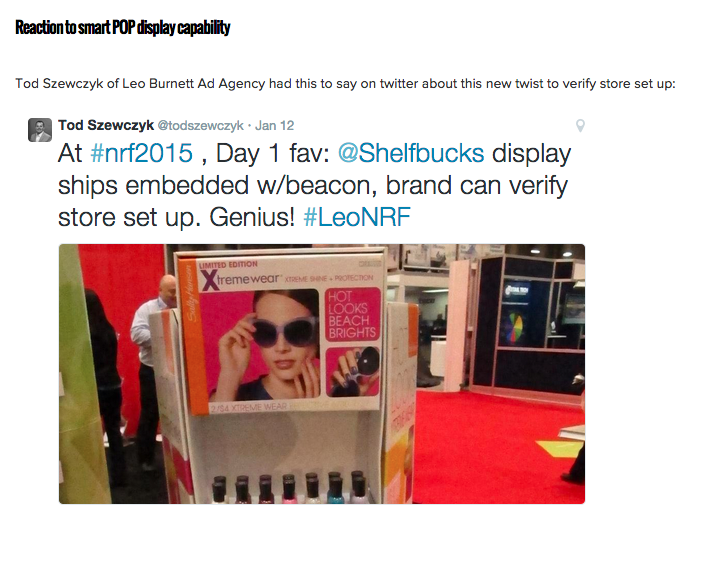

This post originally appeared on Leo Burnett's website on February 25, 2016. This was the first submission that I did for the LeoScope initiative.


How robots are being used in retail and what it means for brands and consumers

I had an interesting and somewhat automated January. Coming off a long family road trip over the holidays (two weeks, five cities, eight states, 3,000 miles), my first week back to work was spent on the road again traveling to the Consumer Electronics Show in Las Vegas.

Nearly everyone there seemed to be wearing some version of a virtual reality headset, drones had taken over a large wing of one of the halls and robots were scattered throughout the event.

Then, a week later, I was off to New York for the National Retail Federation Show, where I met SoftBank’s robot, Pepper, via RobotLAB’s Fashion Recommendation system and had a chance to use Best Buy’s robot, Chloe, at its 23rd Street store. These encounters with automation struck me as not quite an inflection point but a reflection of the state of service robots and how they will be used to enhance consumer experiences.

But before we get too far in, I’d like to take a quick moment to offer a disclaimer about what will and will not be covered in the following few paragraphs. Robots have a polarizing effect on us: we either love them or loathe them. They can be seen as the harbinger of a great economic upheaval and fundamental rewriting of labor distribution. In the extreme, some fear they will bring a Skynet future to us faster than we might imagine. Conversely, others argue that robots are helping us enter a new era of job creation. My interest here is in exploring the beginnings of how they are being used in retail, and not whether any real-world John Connor has anything to fear.

At the NRF show, RobotLAB, a start-up out of San Francisco, demonstrated how product recommendation would be amplified by infusing its software into Pepper for a highly interactive experience. Shoppers simply scan a QR code on a tag using the camera in Pepper’s head to start the process. Pepper can compare a shopper’s body type with a clothing item and make a recommendation on fit, suggesting alternatives if the particular item is not appropriate. While not explicitly mentioned, Pepper could one day store all of the product recommendations and similar items for retargeting later with follow-up offers on sales. Pepper is a robot with the ability to integrate clicks-to-bricks thinking.

While the RobotLAB demo was just that, SoftBank, the maker of Pepper, will be opening a cellphone store run by robots next month in Japan. In what is clearly a test, Pepper will demonstrate products and help shoppers make purchase decisions. People will be part of the store experience as well, but only to ensure that the robots are running properly. Will the utility of impartial product recommendation outweigh the creepiness some may feel in interacting with robots?

While I had read about Best Buy’s installation of Chloe, all of the images seemed to portray a giant yellow arm that would simply reach back into a two-story-high, floor-to-ceiling rack for an item. I suppose the adage that people look taller in movies applies to robots as well. Chloe’s claw stands maybe two feet tall off a platform that resembles an automated flying carpet.

Touchscreen kiosks are positioned in the store and in a vestibule outside. In either case, you order and pay for your item (CD, DVD, phone accessory) at the kiosk. Chloe then acknowledges your order with a smiley face on its LED screen and flies away to fetch it from the racks. As it descended from the sky, the item I purchased (David Bowie’s “Blackstar” CD) sat in a thin plastic sleeve directly under the LED screen. Chloe dumped the CD into a tube that delivered the item with a clunk into the bin at my feet while he/she/it stuck its tongue out at me in a bit of anthropomorphic celebratory acknowledgement.

Undoubtedly a great deal of fun retail theater, Chloe also represents the ability to buy anything at all times of day, even after the store closes. Imagine a future where these types of virtual storefronts are all we shop at, never having to go into a store. It is a valid argument that we can already get almost anything delivered to us almost immediately, and that this will only become more true. Still, I purchased the disc as a remembrance of a favorite artist, and that type of purchasing behavior is likely to survive.

So what does this mean for brands and consumers?

First, the future is here. Brands are already using robots in stores, and we will see them pop up in more and more places. Sephora and Lowe’s have already deployed robots, and Nestlé plans to roll them out this year. It turns out that the less a robot tries to pass for human, the more willing we are to accept it. Robots that look almost, but not quite, human tend to unsettle us, a phenomenon known as the Uncanny Valley effect, and it most likely means that we will see more Chloe/Pepper-type robots than lifelike ones such as Toshiba’s Aiko Chihira.

Second, moving past appearance, the artificial intelligence that powers robots in stores will truly be a game changer. While Chloe was simply fetching an item I requested, RobotLAB’s Pepper was recommending things to me. It’s not too much of a stretch to see the next step being more complicated, conversational experiences and even entertainment. What would you say to your favorite brand, and how would the brand respond to you in real time through the speaker/mouth of a robot?

Last, with every new evolution in communication technology (print, radio, television, Internet, mobile, virtual reality), people and brands have adapted, changed and evolved. Will we consider robots to be the next step in this progression, a new digital/physical platform to reach consumers in a physical space? Will we start to develop new strategies and tactics that facilitate engaging, entertaining and useful experiences? Will robots help attract people back to stores due to their entertainment value? It’s all too soon to tell, but one thing sticks out in my mind: that tongue that Chloe wagged at me as if to say, hey, we are in this together.